How to Build a Generative AI Audio Model?

April 2, 2025

Generative AI audio models have changed how we produce and process sound. Using deep learning, these models generate realistic speech, music, and sound effects for media companies, game developers, and virtual assistants. They can reproduce human voices, synthesize environmental sounds, and compose music.

Building a generative AI audio model requires a working knowledge of machine learning, neural networks, and audio signal processing. This article walks through the essential stages of building an audio model: data collection, model selection, training, evaluation, and deployment. It also surveys the main types of generative models and current generative AI trends, and looks at the role AI development companies play in the field.

Generative AI Models and Their Types

Generative AI models produce new content that resembles human-created work. Deep learning lets these models learn patterns from existing datasets and generate realistic outputs, including text, images, and sound. In audio, this makes it possible to synthesize human-like speech, compose realistic music, and generate custom sound effects.

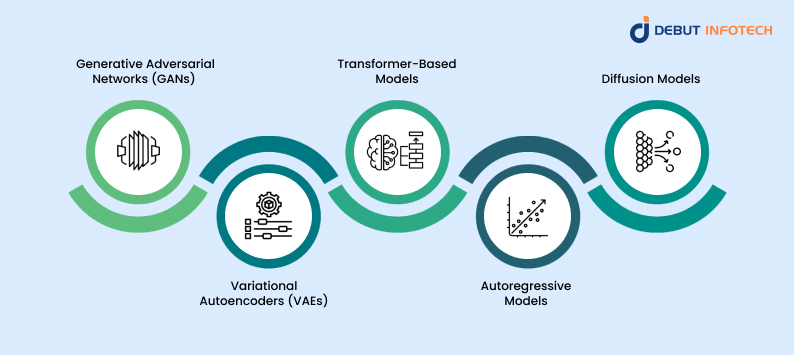

Types of Generative AI Models

The growing interest in generative AI frameworks has led to the development of several types of models, each suited for different applications. Below are the most commonly used generative AI models and how they function.

1. Generative Adversarial Networks (GANs)

Generative Adversarial Networks, or GANs, use a competitive learning process involving two neural networks:

- The Generator: Produces new data based on the training dataset.

- The Discriminator: Evaluates the authenticity of the generated data.

During training, the generator keeps improving its output until the discriminator can no longer tell generated data from real data. GANs let generative AI development companies produce highly realistic voice samples, music compositions, and synthetic background noise. In audio AI generators, GANs enable human-like speech with natural vocal tone and accent.

GANs are also useful in audio-to-audio models, where they convert one kind of sound into another, for example whispered speech into normal speech or an instrumental track into a vocal one. Future trends in audio generation will depend heavily on the continued improvement of these models.
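The generator/discriminator competition described above can be sketched as a pair of loss functions. This is a minimal illustration, not a full training setup: the discriminator scores are made-up probabilities, the function names are hypothetical, and the generator loss uses the common non-saturating form.

```python
import numpy as np

def bce(probs, targets, eps=1e-7):
    """Binary cross-entropy averaged over the batch."""
    probs = np.clip(probs, eps, 1.0 - eps)
    return float(-np.mean(targets * np.log(probs) + (1 - targets) * np.log(1 - probs)))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants to score real clips as 1 and generated clips as 0."""
    return bce(d_real, np.ones_like(d_real)) + bce(d_fake, np.zeros_like(d_fake))

def generator_loss(d_fake):
    """The generator wants the discriminator to score generated clips as 1."""
    return bce(d_fake, np.ones_like(d_fake))

# Hypothetical discriminator outputs (probability of "real") for a batch of 4 clips.
d_real = np.array([0.9, 0.8, 0.95, 0.7])
d_fake = np.array([0.1, 0.2, 0.05, 0.3])

print("discriminator loss:", discriminator_loss(d_real, d_fake))
print("generator loss:", generator_loss(d_fake))
```

In this example the discriminator is winning, so its loss is low and the generator's loss is high. As the generator improves, the scores on generated clips rise and the two losses move in opposite directions.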

2. Variational Autoencoders (VAEs)

Variational Autoencoders (VAEs) are deep learning models that compress data into a lower-dimensional latent representation and then reconstruct it. Unlike standard autoencoders, VAEs sample from that latent space with controlled randomness, which lets them generate new and varied outputs.

In text-to-speech models, VAEs trained on large datasets can produce distinct voice styles and sound effects. They support adaptive AI development by letting a model adjust its voice characteristics to different user inputs, and they help make generated audio sound natural and expressive.

The main advantage of VAEs is that they can produce many audio variations of the same input while preserving its fundamental qualities. This makes them valuable for AI assistant development and AI Copilot systems, where realistic variety in speech is needed.
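The "controlled randomness" of a VAE is usually implemented with the reparameterization trick. The sketch below assumes a hypothetical encoder that has already produced a mean and log-variance for one audio clip; the 8-dimensional latent size and the function names are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps, with eps drawn from a standard normal."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_divergence(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over latent dimensions."""
    return float(-0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var)))

# Hypothetical encoder output for a single clip (assumed values).
mu = np.linspace(-1.0, 1.0, 8)
log_var = np.full(8, -2.0)

# Two samples from the same latent distribution: same input, different variations.
z_a = reparameterize(mu, log_var, rng)
z_b = reparameterize(mu, log_var, rng)

print("difference between samples:", np.abs(z_a - z_b).max())
print("KL regulariser:", kl_divergence(mu, log_var))
```

Decoding `z_a` and `z_b` with the same decoder is what yields multiple variations of one input. The KL term keeps the latent space close to a standard normal so that sampling stays meaningful.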

3. Transformer-Based Models

Transformers have become the leading architecture for sequential data. Models such as GPT and BERT use self-attention to process complex sequences, which makes them highly effective in conversational AI systems, AI assistants, and AI agents.

Transformers strengthen the NLP capabilities that AI development companies deliver through generative AI integration services. Modern AI assistants and AI virtual assistants rely on these models to recognize context, respond intelligently, and speak like humans.

Transformer-based models can also be combined with generative adversarial networks to produce more efficient, higher-quality speech synthesis and voice cloning. Businesses that hire generative AI developers often use these models for projects that require sophisticated AI-based audio generation.
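The self-attention mechanism mentioned above can be sketched in a few lines. This is a single-head, scaled dot-product version with random weights, applied to a made-up sequence of 6 audio frames with 16 features each; real transformer models add multiple heads, positional information, and learned parameters.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention over a (frames, features) sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # similarity of every frame to every other frame
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
frames, d_model = 6, 16                       # illustrative sizes, not from the article
x = rng.standard_normal((frames, d_model))
w_q, w_k, w_v = (rng.standard_normal((d_model, d_model)) * 0.1 for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)
```

Each output frame is a weighted mix of all frames in the sequence, which is how the model captures long-range context.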

4. Autoregressive Models

Autoregressive models generate data by predicting one element at a time based on previous inputs. These models are useful in applications such as:

- Audio-to-audio models: Transforming existing audio into new formats, such as converting a spoken sentence into a song.

- Generative AI frameworks: Creating dynamic, real-time voice synthesis for interactive AI applications.

- AI Copilot systems: Enhancing AI-powered assistants by generating realistic speech responses.

In generative AI development, autoregressive models contribute to the advancement of text-to-speech models, improving their ability to deliver smooth and coherent speech. These models are widely used in the entertainment industry, voice cloning, and generative AI consulting services for customized AI speech applications.
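To make "predicting one element at a time based on previous inputs" concrete, here is a toy sketch. It assumes a fixed two-coefficient linear predictor instead of a trained neural network, so it only illustrates the generation loop; production autoregressive audio models learn a far richer predictor from data.

```python
import math
import random

def generate_autoregressive(coeffs, seed_samples, n_samples, noise_std=0.0, rng=None):
    """Generate samples one at a time; each depends on the previous len(coeffs) samples."""
    rng = rng or random.Random(0)
    samples = list(seed_samples)
    while len(samples) < n_samples:
        context = samples[-len(coeffs):][::-1]          # most recent sample first
        prediction = sum(c * s for c, s in zip(coeffs, context))
        samples.append(prediction + rng.gauss(0.0, noise_std))
    return samples

# Second-order predictor whose noise-free output is a pure tone:
# x[n] = 2*cos(w)*x[n-1] - x[n-2]
sample_rate = 8000            # assumed sample rate in Hz
freq_hz = 440.0               # assumed tone frequency
w = 2 * math.pi * freq_hz / sample_rate
coeffs = [2 * math.cos(w), -1.0]
seed = [0.0, math.sin(w)]

tone = generate_autoregressive(coeffs, seed, n_samples=200)
print(len(tone), max(tone))
```

Because every sample depends on earlier output, generation is inherently sequential, which is why autoregressive audio models tend to be slower than parallel approaches at inference time.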

5. Diffusion Models

Diffusion models represent a new wave in generative AI trends, particularly in image and audio generation. These models work by transforming random noise into structured data through iterative steps.

While still in the early stages of development for generative audio, diffusion models show promise for high-fidelity soundscapes, ultra-realistic speech, and music synthesis. Researchers at generative AI development companies and AI agent development companies are exploring their use in audio AI generators for next-generation audio synthesis.
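The noise-to-signal idea can be sketched with the forward (noising) step and its inverse. The schedule values and function names below are assumptions chosen for illustration, and the true noise stands in for the prediction a trained network would supply.

```python
import numpy as np

rng = np.random.default_rng(0)

# Linear noise schedule (illustrative values).
num_steps = 100
betas = np.linspace(1e-4, 0.05, num_steps)
alpha_bars = np.cumprod(1.0 - betas)   # fraction of signal variance kept at each step

def add_noise(x0, t, noise):
    """Forward process: blend the clean signal with Gaussian noise at step t."""
    return np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * noise

def estimate_clean(x_t, t, predicted_noise):
    """Invert the forward step given a noise estimate."""
    return (x_t - np.sqrt(1.0 - alpha_bars[t]) * predicted_noise) / np.sqrt(alpha_bars[t])

# One second of a 220 Hz tone at 8 kHz stands in for real audio.
t_axis = np.arange(8000) / 8000.0
x0 = np.sin(2 * np.pi * 220.0 * t_axis)
noise = rng.standard_normal(x0.shape)

x_noisy = add_noise(x0, num_steps - 1, noise)
x_rec = estimate_clean(x_noisy, num_steps - 1, noise)   # true noise used as a stand-in

print("signal kept at last step:", alpha_bars[-1])
print("max reconstruction error:", np.abs(x_rec - x0).max())
```

A diffusion model is trained to predict `noise` from `x_noisy` and the step index. Generation then starts from pure noise and applies the learned denoising step repeatedly.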

By choosing the right generative AI frameworks, developers can build models that cater to specific applications.

Steps to Build a Generative Audio Model

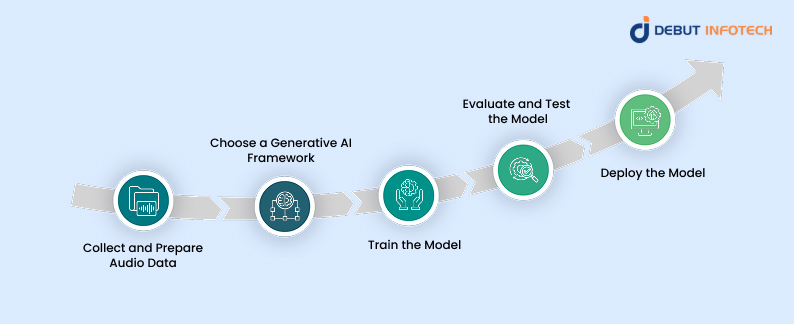

Creating a generative audio model involves multiple stages, from data collection to model training and deployment. Below is a step-by-step guide to the process.

1. Collect and Prepare Audio Data

A high-quality dataset is the foundation of any AI model. The better the training data, the more accurate the generated audio will be. Steps include:

- Data Collection: Gather relevant audio samples, such as human speech, instrument sounds, or environmental noises.

- Preprocessing: Convert all files to a consistent format (e.g., WAV, or MP3 where lossy compression is acceptable) and a single sample rate.

- Feature Extraction: Use spectrograms, MFCCs (Mel-frequency cepstral coefficients), or waveforms to represent the audio data in a machine-readable format.
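The preprocessing and feature-extraction steps above can be sketched roughly as follows. This example uses only NumPy and a synthetic 1 kHz tone in place of a real recording; the function name, frame length, and hop length are illustrative choices. Audio libraries such as librosa offer ready-made mel spectrogram and MFCC routines for real projects.

```python
import numpy as np

def magnitude_spectrogram(signal, frame_len=512, hop_len=128):
    """Short-time Fourier transform magnitudes, shape (num_frames, frame_len // 2 + 1)."""
    window = np.hanning(frame_len)
    num_frames = 1 + (len(signal) - frame_len) // hop_len
    frames = np.stack([
        signal[i * hop_len : i * hop_len + frame_len] * window
        for i in range(num_frames)
    ])
    return np.abs(np.fft.rfft(frames, axis=1))

sample_rate = 16000                              # assumed common sample rate for all clips
t = np.arange(sample_rate) / sample_rate
signal = 0.5 * np.sin(2 * np.pi * 1000.0 * t)    # synthetic stand-in for a recording

# Peak-normalise so every clip uses the same amplitude range.
signal = signal / np.max(np.abs(signal))

spec = magnitude_spectrogram(signal)
peak_bin = int(np.argmax(spec.mean(axis=0)))
peak_hz = peak_bin * sample_rate / 512

print(spec.shape, peak_hz)
```

Each row of `spec` describes the frequency content of one short frame. A model trained on spectrograms sees this 2-D array rather than the raw waveform.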

2. Choose a Generative AI Framework

To build a high-performing audio model, selecting the right tools is essential. Widely used generative AI frameworks and model architectures include:

- TensorFlow and PyTorch – Popular deep learning libraries for training audio models.

- WaveGAN – A generative adversarial network (GAN) designed for raw waveform generation.

- Tacotron and WaveNet – Models used for speech synthesis in text-to-speech systems.

- MelGAN – A GAN-based vocoder optimized for fast, high-quality speech generation.

Each option offers unique advantages, and the choice depends on the complexity of the audio AI generator being developed.

3. Train the Model

Once the dataset is prepared and a framework is selected, the next step is training the generative AI audio model. This process involves:

- Choosing an Architecture: Options include generative adversarial networks (GANs), recurrent neural networks (RNNs), and transformer-based models.

- Training the Model: The model learns patterns in audio data using supervised or unsupervised learning.

- Optimizing with Loss Functions: Loss functions such as Mean Squared Error (MSE) or perceptual loss measure how far the output is from the target and guide the optimization.

- Fine-Tuning: Adjust hyperparameters and retrain the model for better performance.

Training a generative audio model requires substantial computational resources, making it beneficial to work with a generative AI development company or hire generative AI developers for professional assistance.
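The training steps listed above can be illustrated with a deliberately small example: gradient descent on an MSE loss for a linear model that predicts the next audio sample from the previous four. The data is a synthetic noisy tone, and the learning rate, context size, and epoch count are assumed hyperparameters; a real generative audio model would use a deep network and a framework such as PyTorch or TensorFlow.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy dataset: predict the next sample of a noisy tone from the previous 4 samples.
n = np.arange(2000)
audio = np.sin(2 * np.pi * 0.03 * n) + 0.01 * rng.standard_normal(n.size)
context = 4
inputs = np.stack([audio[i : i + context] for i in range(len(audio) - context)])
targets = audio[context:]

weights = np.zeros(context)
learning_rate = 0.1          # hyperparameter to tune
losses = []

for epoch in range(200):
    predictions = inputs @ weights
    errors = predictions - targets
    loss = float(np.mean(errors ** 2))                 # mean squared error
    gradient = 2.0 * inputs.T @ errors / len(targets)  # derivative of the MSE w.r.t. weights
    weights -= learning_rate * gradient
    losses.append(loss)

print("first loss:", losses[0], "last loss:", losses[-1])
```

Fine-tuning in this setting means rerunning the loop with a different `learning_rate`, `context`, or number of epochs and comparing the final loss on held-out audio.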

4. Evaluate and Test the Model

Before deployment, the model’s output must be evaluated for quality and realism. This step includes:

- Listening Tests: Assess the clarity and naturalness of generated audio.

- Spectrogram Analysis: Compare the spectrograms of generated and real audio samples.

- Performance Metrics: Use objective evaluation methods such as signal-to-noise ratio (SNR) and Word Error Rate (WER).

Testing ensures that the model meets the required quality standards and performs well across various use cases.
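The two objective metrics named above can be computed as in the sketch below. The signals and sentences are made up for illustration. For generated speech, WER is typically measured by transcribing the audio with a speech recognizer and comparing the transcript with the intended text; that transcription step is assumed and not shown here.

```python
import numpy as np

def snr_db(reference, estimate):
    """Signal-to-noise ratio in decibels, treating (estimate - reference) as noise."""
    noise = estimate - reference
    return float(10.0 * np.log10(np.sum(reference ** 2) / np.sum(noise ** 2)))

def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j] = edits needed to turn the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            substitution = dist[i - 1][j - 1] + (ref[i - 1] != hyp[j - 1])
            dist[i][j] = min(substitution, dist[i - 1][j] + 1, dist[i][j - 1] + 1)
    return dist[len(ref)][len(hyp)] / len(ref)

# Made-up example data.
clean = np.sin(2 * np.pi * 5.0 * np.arange(1000) / 1000.0)
degraded = 1.1 * clean    # a 10 percent amplitude error

print("SNR (dB):", snr_db(clean, degraded))
print("WER:", word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

Higher SNR and lower WER are better, but neither replaces listening tests, since a clip can score well on both and still sound unnatural.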

5. Deploy the Model

Once trained and validated, the generative audio model can be integrated into applications using APIs or cloud-based services. Deployment options include:

- On-Premises Deployment: Running the model on local hardware for complete control.

- Cloud Deployment: Using platforms like AWS, Google Cloud, or Azure for scalability.

- Edge AI Deployment: Optimizing the model for mobile devices and embedded systems.

Companies offering Generative AI Integration Services can assist in deploying models for real-world applications.
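For edge deployment, one common optimization (an assumption here, since the article does not name a specific technique) is weight quantization. The sketch below applies symmetric 8-bit quantization to a random weight matrix that stands in for one layer of a trained model; the function names are hypothetical.

```python
import numpy as np

def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127] with one shared scale."""
    scale = float(np.max(np.abs(weights))) / 127.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the int8 values."""
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
weights = rng.standard_normal((64, 64)).astype(np.float32)   # stand-in for a trained layer

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = float(np.max(np.abs(weights - restored)))

print("bytes before:", weights.nbytes, "bytes after:", q.nbytes)
print("max rounding error:", max_error)
```

The weights take a quarter of the memory at the cost of a small rounding error per value. Whether that error is audible depends on the model, so quantized models should go through the same evaluation step as the original.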

Use Cases of Generative Audio Models

Generative audio models have transformed the way sound is created and processed across multiple industries. These AI-powered systems analyze and generate realistic audio by mimicking human speech, music, and environmental sounds. Businesses use generative AI frameworks and services to build new applications. Below are some of the most impactful use cases of generative AI audio models.

1. Virtual Assistants & AI Chatbots

Generative AI models play a crucial role in AI assistants and AI virtual assistants, enhancing their ability to interact with users naturally. Companies specializing in generative AI development use these models to improve conversational AI, enabling virtual assistants to generate human-like responses with natural intonation and emotion.

Many businesses use AI-powered voice assistants for:

- Customer support – Automating responses in call centers and chatbots.

- Personal assistants – AI-driven tools like Siri, Alexa, and Google Assistant.

- Multilingual communication – Instant translation and voice adaptation for different languages.

The continuous improvement of text-to-speech models has made these assistants more realistic, reducing robotic-sounding speech and making interactions more seamless.

2. Music Composition and Sound Design

Generative AI is reshaping the music industry by enabling AI-powered tools to create unique compositions. By analyzing musical patterns and structures, AI algorithms generate original music pieces that can be hard to distinguish from those made by human composers.

Popular applications of AI in music include:

- AI-assisted composition – Platforms like OpenAI’s Jukebox generate complete songs.

- Soundtrack creation – AI tools develop adaptive background music for movies and games.

- Personalized music generation – AI adjusts music based on user preferences.

With continued advancements in generative AI models, AI-generated music is being used by artists, content creators, and filmmakers to enhance storytelling and engagement.

3. Voice Cloning & Dubbing

Generative AI audio models are widely used in voice cloning, enabling the creation of synthetic voices that sound nearly identical to human speech. This technology is valuable in industries such as:

- Film and animation – Dubbing movies and TV shows into different languages with AI-generated voices.

- Audiobooks – Creating realistic AI-narrated audiobooks with different voice styles.

- Gaming – Generating voiceovers for characters without hiring voice actors.

Using audio AI generators, companies can synthesize realistic voices quickly and at lower cost, making professional-grade dubbing and narration more accessible.

4. Speech Enhancement & Noise Reduction

Generative AI is also being used to enhance recorded audio quality by reducing background noise, correcting speech distortions, and improving clarity. This is particularly useful in:

- Call centers – Filtering out noise for clearer customer interactions.

- Podcasting & broadcasting – Enhancing voice recordings to sound more professional.

- Hearing aid technology – Improving speech comprehension for those with hearing impairments.

With adaptive AI development, speech enhancement models can be fine-tuned for different environments, making them indispensable for audio processing applications.

5. Gaming & Virtual Reality (VR)

In the gaming and VR industry, generative AI creates immersive soundscapes that enhance user experience. AI-generated sounds are increasingly replacing pre-recorded audio to provide dynamic and interactive environments.

Applications include:

- AI-generated voiceovers – Game characters with unique, AI-driven dialogue.

- Dynamic sound effects – Sounds that adapt to in-game events in real-time.

- Realistic 3D audio – Spatial sound positioning for enhanced VR experiences.

Developers working with AI agent development companies use these models to create lifelike audio that responds dynamically to user actions, making gaming and VR environments more engaging.

6. Healthcare & Accessibility Solutions

Generative AI audio models are being adopted in the healthcare sector to improve accessibility for individuals with speech or hearing impairments. AI-generated speech technology is used in:

- Speech therapy – AI-generated voices help individuals improve their pronunciation.

- Voice prosthetics – Creating natural-sounding synthetic voices for those who have lost their ability to speak.

- Assistive reading tools – Text-to-speech models for visually impaired individuals.

By working with AI development companies, healthcare providers can integrate AI-driven speech solutions to improve patient care and accessibility.

7. Marketing & Advertising

Marketers use AI-generated voices for audio advertisements, podcasts, and personalized content. Generative AI consultants help brands create engaging audio experiences that capture audience attention.

Some use cases in marketing include:

- AI-generated voiceovers for ads – Creating high-quality voice narrations for commercials.

- Personalized audio messages – Customizing marketing campaigns with AI-driven speech.

- Synthetic brand voices – Companies using AI to develop unique digital brand personas.

As AI technology advances, AI audio generation will become even more refined, offering businesses new ways to engage their audiences through sound.

The Future of Generative Audio

Adaptive AI development continues to advance, leading to more sophisticated and realistic generative AI models. Future trends include:

- Improved Speech Realism: More natural and expressive text-to-speech models.

- Cross-Modal AI: Combining audio with visual and textual data for enhanced multimedia experiences.

- Personalized AI Voices: Custom AI voices for individual users and brands.

- Low-Latency Audio Synthesis: Faster and more efficient audio-to-audio models.

With these innovations, businesses increasingly turn to AI development companies and generative AI consultants to explore new opportunities in AI-driven audio development.

Conclusion

Building a generative AI audio model requires careful planning, from data collection to model selection and deployment. By using the right generative AI frameworks and leveraging advancements in generative adversarial networks, developers can create highly realistic speech, music, and sound effects.

Partnering with a generative AI development company can accelerate the process for businesses looking to integrate AI-driven audio solutions. If you’re interested in AI-powered audio generation, Debut Infotech offers expert AI development services to help you stay ahead in this rapidly evolving field.

Frequently Asked Questions

A. A generative AI audio model is an AI system that creates or modifies sound based on learned patterns. These models generate realistic speech, music, or sound effects using deep learning techniques like generative adversarial networks (GANs) and text-to-speech models.

A. Generative AI audio models analyze vast amounts of audio data and use AI algorithms to generate new sounds that resemble human speech, music, or environmental noise. Some models convert text to speech, while others create new compositions or enhance existing audio.

A. Generative audio models are widely used in:

- AI virtual assistants and chatbots for realistic voice interactions.

- Music composition, creating original songs or soundtracks.

- Voice cloning, replicating human voices for media and entertainment.

- Speech enhancement, improving audio clarity by reducing noise.

- Gaming & VR, generating immersive sound effects.

A. With advances in generative AI, AI-generated voices are becoming more natural and expressive. Modern audio AI generators can mimic human speech, including tone, pitch, and emotion. While some minor differences remain, AI-generated voices are increasingly hard to distinguish from human voices.

A. An audio model generates sound from scratch based on trained data, such as text-to-speech models that convert text into spoken words. An audio-to-audio model modifies existing sounds, such as enhancing speech clarity, changing voices, or transforming one type of sound into another.

A. The AI development cost depends on the complexity of the model, the required dataset, and the level of customization. Working with a specialized AI development company can improve results but can also increase costs.

A. Businesses can integrate generative AI audio by working with generative AI consultants or hiring generative AI developers. They can develop custom AI models for voice assistants, automated customer service, interactive media, and more. Partnering with an AI agent development company can also help create AI-driven voice applications tailored to business needs.
